A story of Lazy Loading File System Operations for better dev system performance

In this blog post I want to share a story of a performance bottleneck using the filesystem that we experienced in our development setup.

In the Tideways backend, we have a simple homegrown database migration tool that scans a directory for .sql files and applies them if not already done. It is a very old piece of code that I used since before the times of doctrine/migrations. It is much simpler but works for us.

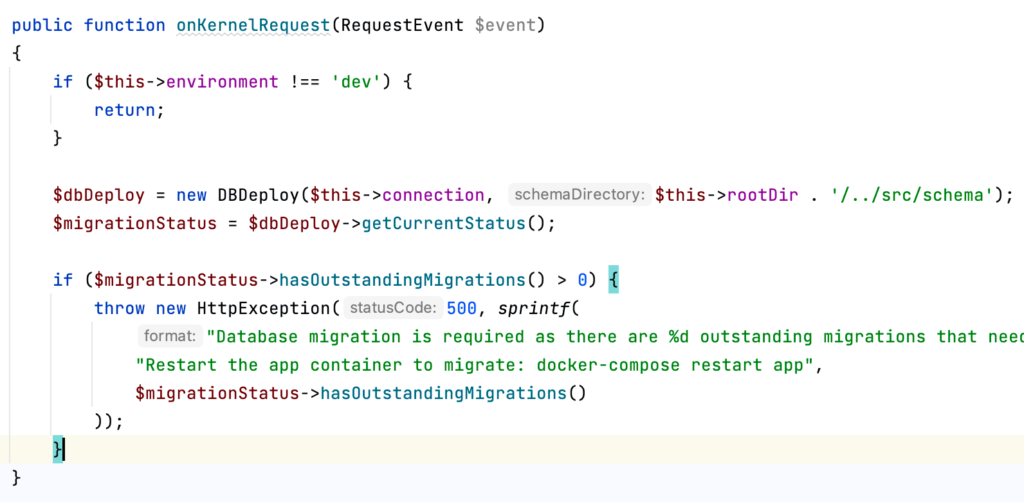

To avoid the development system running with an outdated schema after git pulling commits with SQL schema changes, this database migration tool checks for missing migrations to apply on every single request (in the development environment).

This check only requires 1 SQL select to fetch the already applied changes from the changelog table and one glob to fetch the list of all schema files. Should be quick and straightforward! Right? The high-level code looks something like this:

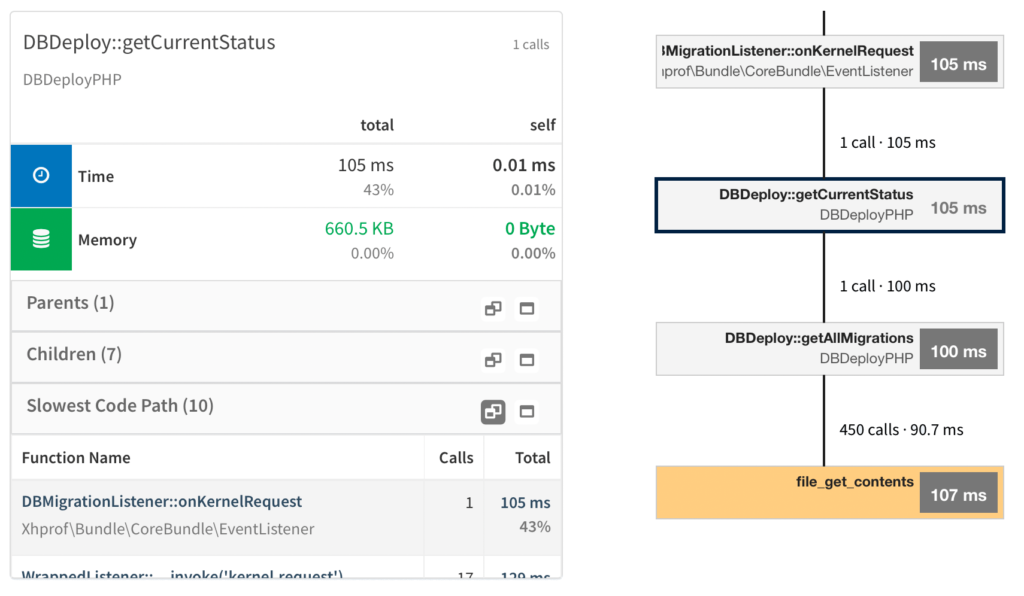

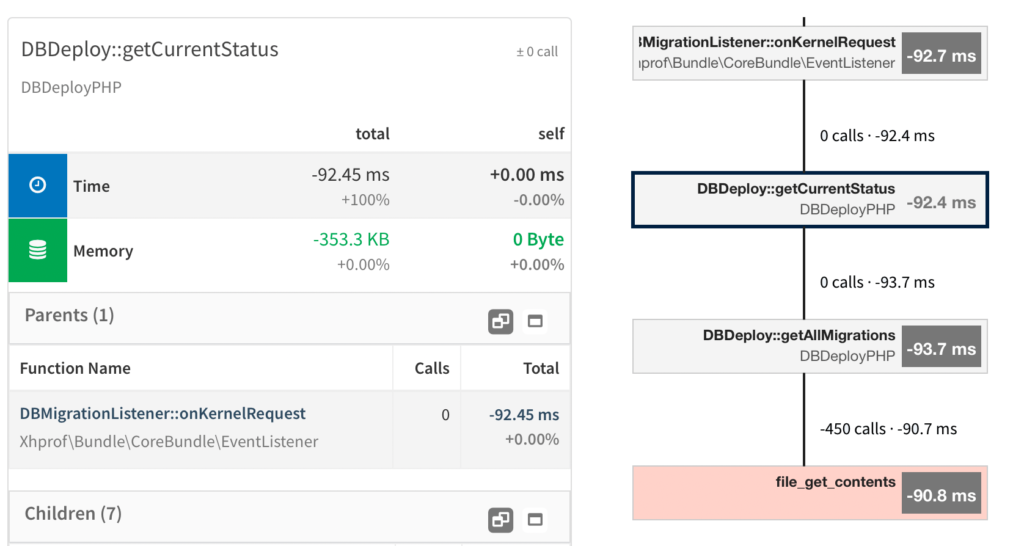

Looking at the callgraph however, shows a bottleneck in file_get_contents:

We have 450 SQL migrations already, and for checking the current status the content of each SQL file is loaded into memory in DbDeploy::getAllMigrations().

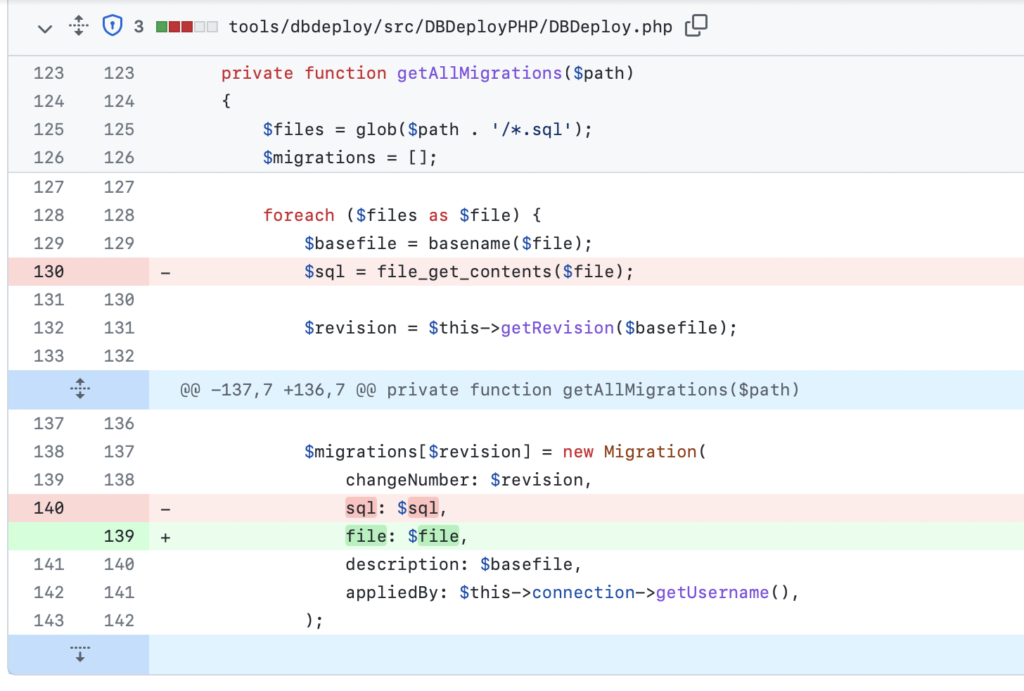

The MigrationStatus::hasOutstandingMigrations() method shown above does not need the SQL file contents, so we can refactor the code to call file_get_contents at the last possible moment.

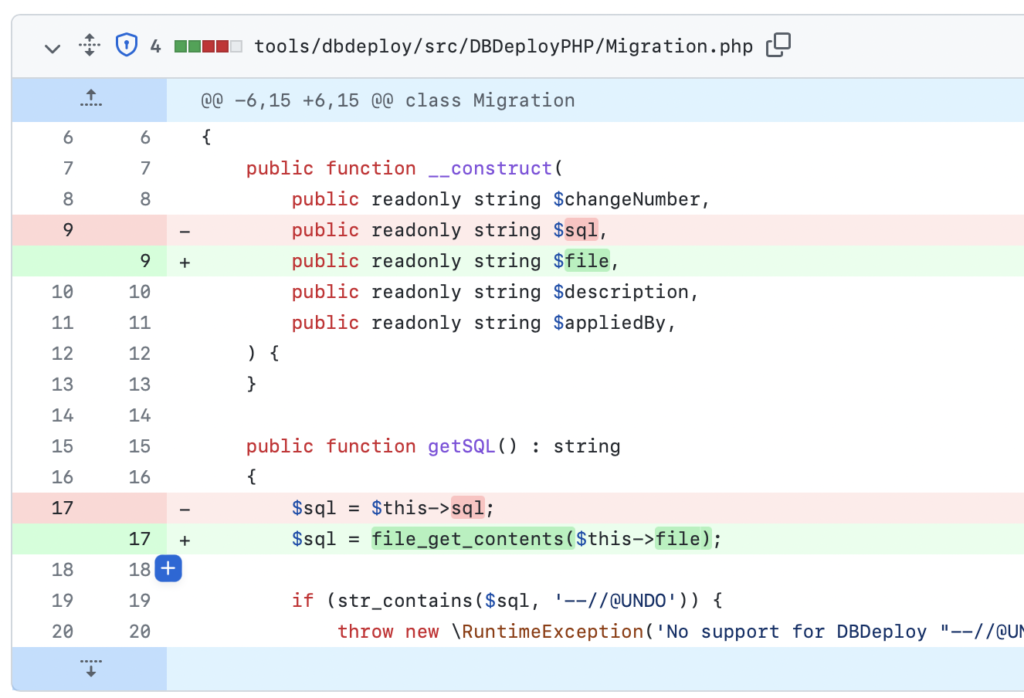

This lazy loading pattern is widespread when it comes to database operations but also makes sense for the file operations here. The virtual filesystem of our development environment, which makes file operations considerably slower than with a non-virtual filesystem, adds extra time to these operations.

After refactoring away the file_get_contents calls from the DbDeploy::getAllMigrations() method into Migration::getSQL() that is only called directly before executing the changes, we see improvements.

A 90ms improvement by comparing a callgraph before and after the change:

- File I/O drops from 128ms to 49ms

- Overall response drops from 246ms to 154ms

file_get_contentsduration improves by 90ms

A nice gain, considering it runs on every request in the development environment!